ITAM Isn’t Failing Because You Don’t Care

Most mid-market IT teams already understand that IT asset management matters. The challenge is rarely awareness; it is capacity.

When you are part of a lean team supporting 800 to 2,000 employees across on-prem infrastructure, Azure workloads, and a constantly expanding SaaS stack, formal ITAM best practices can feel disconnected from daily reality. You are managing tickets, overseeing patch cycles, responding to leadership requests, and handling whatever surfaced that morning. Asset governance often becomes something you intend to refine rather than something you have sustained time to operationalize.

The ITAM frameworks that many organizations inherited were designed for enterprises with dedicated asset management roles and layered governance. Mid-market environments operate differently. Responsibilities overlap. Tooling evolves quickly. SaaS adoption frequently expands outside centralized procurement. Hybrid infrastructure has become the norm rather than the exception.

In that context, recurring ITAM friction is less about negligence and more about visibility.

Our benchmark research shows that 74% of endpoints carry critical vulnerabilities. Separate utilization analysis indicates that 97% of servers operate at less than 25% capacity. Software overspend routinely falls in the 25–30% range. These numbers do not suggest inattentive IT teams. They reflect fragmented data and delayed awareness.

When asset, license, cloud, and vulnerability data reside in separate systems, drift accumulates gradually. The environment changes daily, but awareness lags behind it. Issues surface once they become urgent rather than when they are manageable.

The objective is not to reduce tool count but to reduce blind spots across the environment.

Mistake #1: Waiting for the Crisis to Tell You What’s Wrong

Reactive IT asset management rarely announces itself in advance. For long stretches, everything appears stable. Systems are running. Renewals are months away. Security scans show manageable findings.

Then a specific trigger forces attention: an audit notice with a compressed response window, a vulnerability scan exposing hundreds of endpoints, or a renewal discussion revealing unexpected licensing costs.

In those moments, the team shifts from planning to triage. The scramble is not the result of carelessness; it reflects incomplete cross-environment visibility.

Teams with structured software license audit processes tend to understand their exposure before an audit notice arrives, reducing the urgency of compliance conversations.

Most mid-market IT teams already operate multiple systems. Endpoint management tracks device posture. Azure consoles provide cloud telemetry. A SaaS management tool may capture part of the subscription landscape. Configuration data lives in a CMDB. Security scanning operates independently. Renewal schedules are maintained in spreadsheets.

Each tool performs its intended function, but together they fail to provide a unified operational view.

Without continuous reconciliation across SaaS, cloud, hardware, and vulnerability data, inefficiencies remain hidden. Inactive licenses renew automatically. Temporary cloud resources persist beyond project completion. Vulnerabilities remain unresolved because ownership is unclear. Underutilized servers continue consuming budget without visibility into optimization opportunities.
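As a rough illustration of what one reconciliation check could look like, the sketch below flags license seats that have been idle for a while and are about to renew. It is a minimal example under stated assumptions, not a description of any particular product: the `LicenseSeat` fields, the 90-day idle window, the 60-day renewal lookahead, and the sample records are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class LicenseSeat:
    # Hypothetical record shape; real exports will differ by vendor.
    app: str
    user: str
    last_active: date    # last recorded sign-in or usage event
    renewal_date: date
    annual_cost: float


def inactive_seats_before_renewal(seats, today, idle_days=90, lookahead_days=60):
    """Return seats idle for at least `idle_days` that renew within `lookahead_days`."""
    idle_cutoff = today - timedelta(days=idle_days)
    renewal_horizon = today + timedelta(days=lookahead_days)
    return [
        s for s in seats
        if s.last_active <= idle_cutoff
        and today <= s.renewal_date <= renewal_horizon
    ]


# Illustrative sample data only, not real figures.
seats = [
    LicenseSeat("crm", "ana", date(2024, 1, 10), date(2024, 7, 1), 1200.0),
    LicenseSeat("crm", "ben", date(2024, 5, 28), date(2024, 7, 1), 1200.0),
    LicenseSeat("design", "cy", date(2023, 11, 2), date(2025, 1, 15), 600.0),
]

flagged = inactive_seats_before_renewal(seats, today=date(2024, 6, 1))
reclaimable = sum(s.annual_cost for s in flagged)
print([s.user for s in flagged], reclaimable)
```

The thresholds are the design choice worth debating: a shorter idle window catches more waste but risks flagging seasonal users, so they are exposed as parameters rather than fixed.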

Audit readiness reduces exposure during renewals and compliance reviews, but it does not solve fragmentation on its own. The deeper issue is timing. When visibility is delayed, awareness arrives through disruption rather than through routine monitoring.

A unified discovery layer changes that timing. When SaaS, cloud, endpoints, and infrastructure data are continuously consolidated, signals surface earlier. Overspend becomes visible before renewal negotiations. Vulnerabilities are contextualized against live asset inventories as they emerge. Cloud underutilization can be addressed before budget planning cycles lock in inefficiencies.

Proactive IT asset management does not eliminate risk. It shortens the interval between change and response.

Mistake #2: Confusing Tool Volume with Visibility

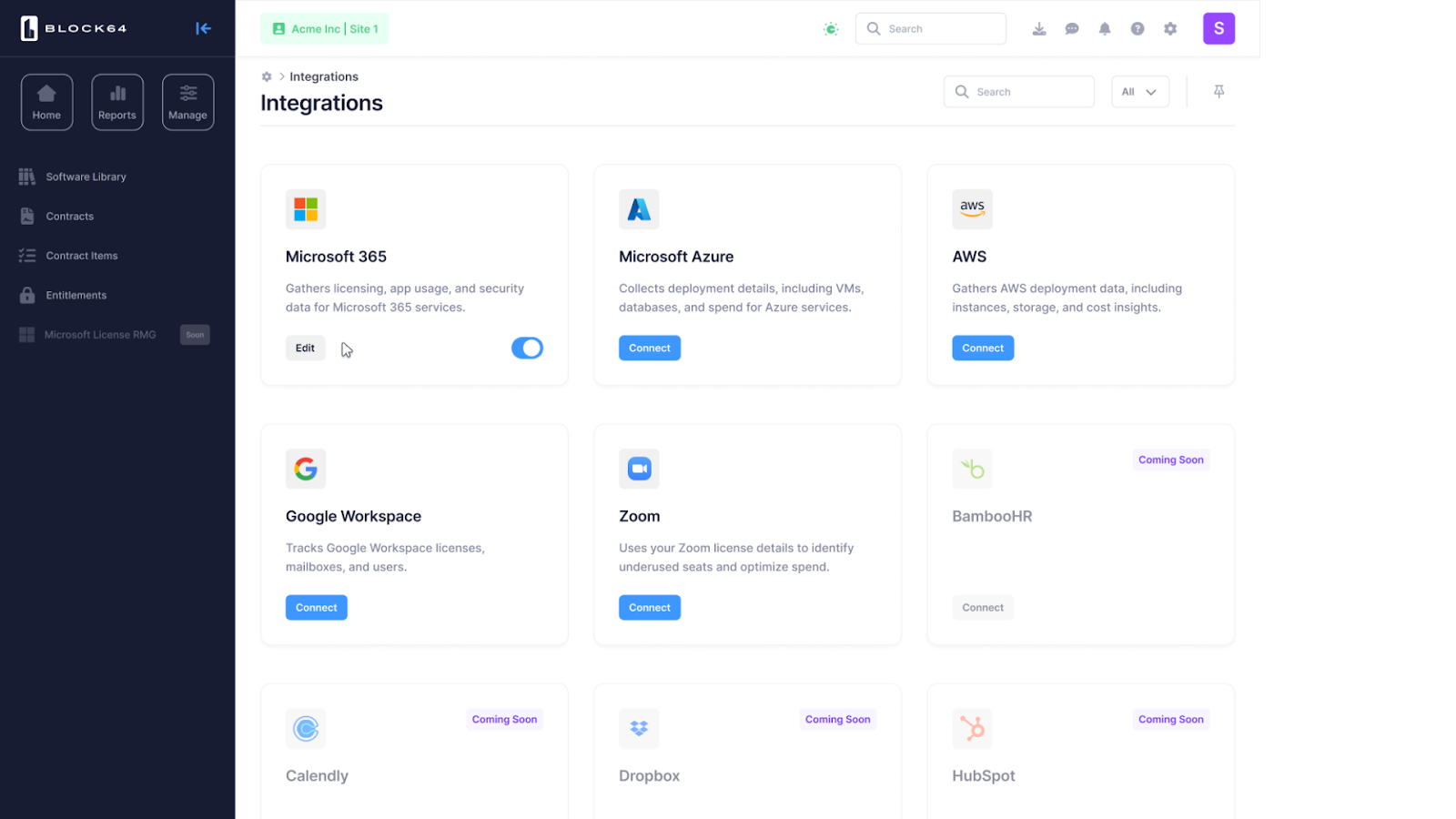

Mid-market IT environments tend to accumulate tools incrementally. A cloud console is necessary. Endpoint management is mandatory. Security scanning is non-negotiable. SaaS management platforms are introduced to address subscription growth. Over time, dashboards multiply.

The assumption is that broader tool coverage produces stronger control.

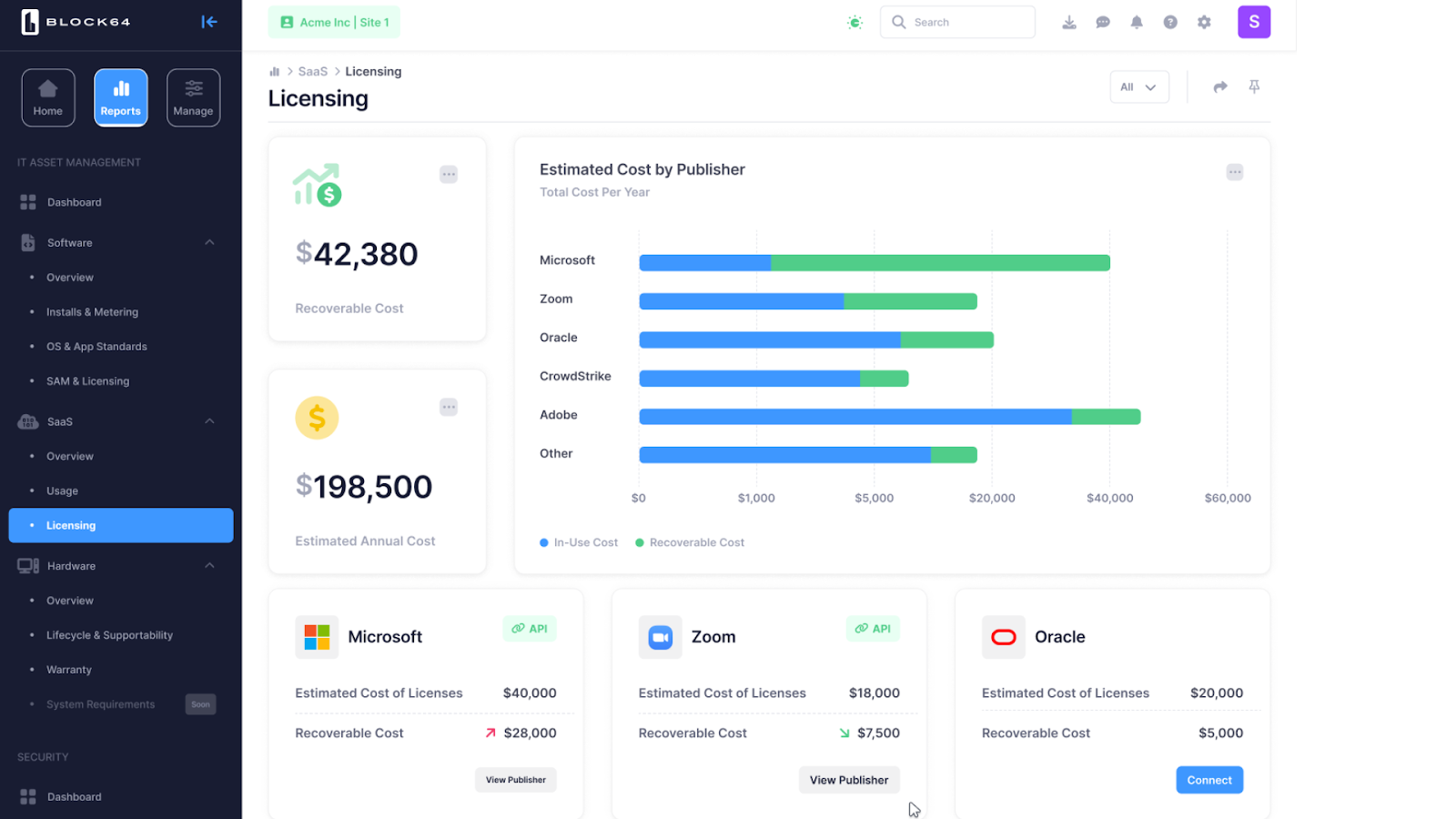

In practice, fragmentation increases complexity. Each tool answers a narrow question but leaves broader cross-environment questions unresolved. How many SaaS licenses are inactive across departments? Which cloud workloads consistently operate below 25% utilization? Where do high-severity vulnerabilities intersect with unmanaged devices? Which upcoming renewals align with actual usage patterns?

Answering those questions often requires exports, manual reconciliation, and spreadsheet stitching.
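Two of the questions above can be sketched as a small cross-source join once the exports sit in one place. This is a hedged example only: the device names, finding records, and CPU samples are made-up stand-ins for exports from an endpoint manager, a security scanner, and a cloud console, and the 25% threshold simply mirrors the utilization figure cited in this article.

```python
from statistics import mean

# Hypothetical exports from three separate tools (illustrative data only).
managed_devices = {"lt-001", "lt-002", "srv-010"}   # endpoint management
vuln_findings = [                                    # security scanner
    {"host": "lt-001", "severity": "high"},
    {"host": "lt-777", "severity": "high"},
    {"host": "srv-010", "severity": "low"},
    {"host": "kiosk-3", "severity": "critical"},
]
cpu_samples = {                                      # cloud console, CPU percent
    "vm-batch": [4.0, 6.0, 5.0],
    "vm-web": [40.0, 55.0, 61.0],
}


def unmanaged_high_severity(findings, managed, severities=("high", "critical")):
    """Hosts with serious findings that the endpoint manager does not know about."""
    return sorted(
        {f["host"] for f in findings
         if f["severity"] in severities and f["host"] not in managed}
    )


def underutilized(samples, threshold=25.0):
    """Workloads whose average CPU utilization is below `threshold` percent."""
    return sorted(
        name for name, values in samples.items()
        if values and mean(values) < threshold
    )


exposed_hosts = unmanaged_high_severity(vuln_findings, managed_devices)
idle_workloads = underutilized(cpu_samples)
print(exposed_hosts, idle_workloads)
```

Neither function is complicated. The difficulty in practice is getting the three data sets keyed on a shared identifier, which is exactly the work that exports and spreadsheet stitching do by hand.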

The 97% server underutilization statistic illustrates how inefficiency can persist even in environments with monitoring tools in place. Utilization data may exist within individual consoles, but without cross-platform aggregation, it rarely informs strategic decisions. The same dynamic underpins the 25–30% overspend benchmark. Visibility is partial rather than unified.

Security posture suffers under similar conditions. When asset data is dispersed across siloed systems, it becomes harder to say which assets exist, which are exposed, and who owns them. Even well-resourced security teams struggle to manage exposure when no single view aligns assets, vulnerabilities, and ownership.

Full platform replacement is rarely realistic for mid-market organizations. Disruption costs are real. Retraining consumes limited bandwidth. Procurement cycles slow operational momentum.

A more sustainable approach centers on integration rather than replacement. When a unified visibility layer overlays existing tools and continuously connects SaaS, cloud, hardware, and vulnerability data, individual systems become more actionable. Reports reflect current state rather than stitched snapshots. Renewal planning aligns with consumption data. Security findings connect directly to asset context.

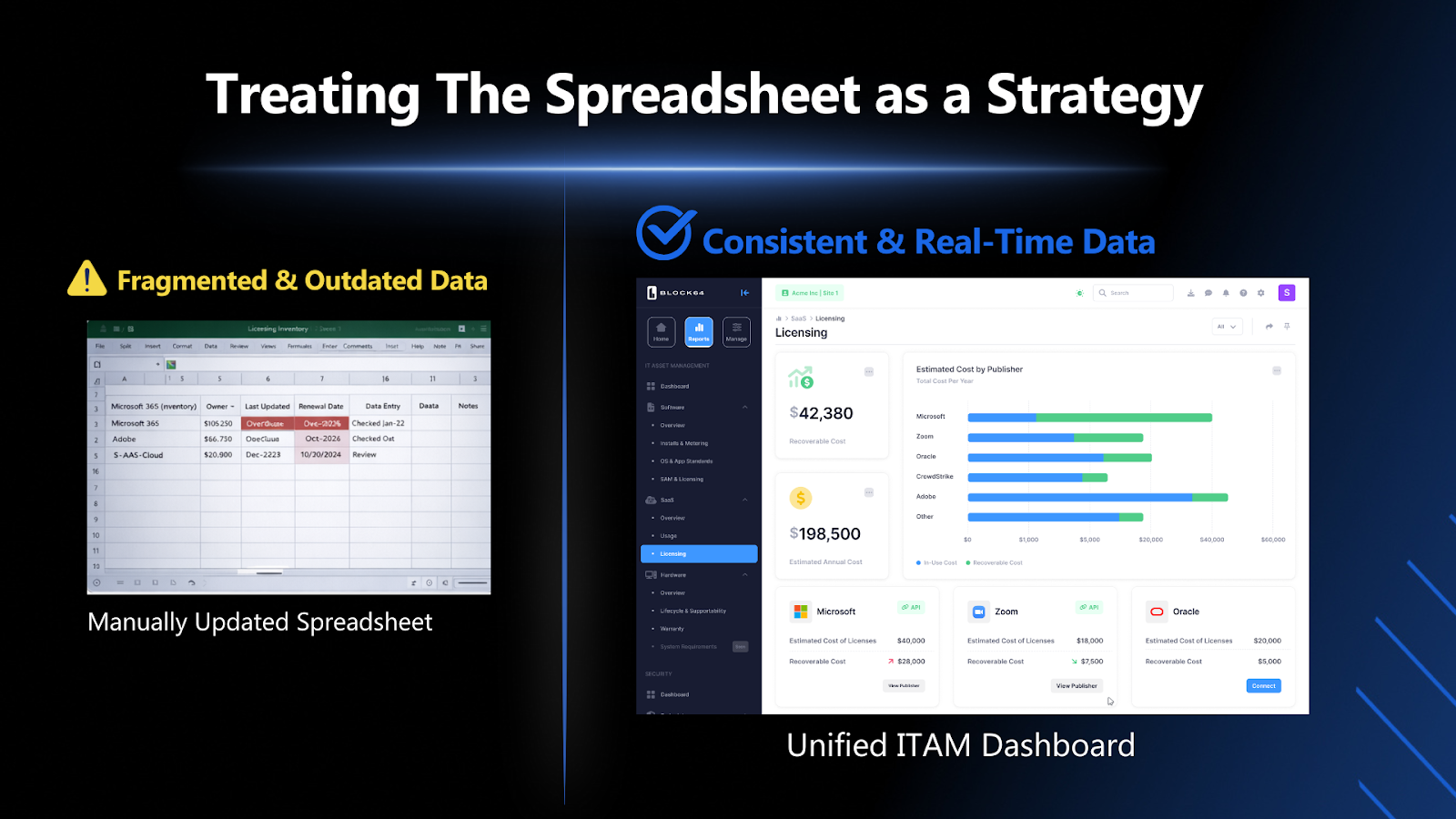

Mistake #3: Treating the Spreadsheet as a Strategy

Spreadsheets remain one of the most common ITAM control mechanisms in mid-market organizations. They provide structure and create a tangible artifact that leadership can review. They also introduce latency.

On the day an inventory spreadsheet is updated, it may accurately reflect the environment. Within days, that accuracy begins to erode. New employees onboard. SaaS subscriptions expand. Azure resources are provisioned for temporary initiatives. License renewals auto-process. Security findings emerge.

Unless someone manually updates the file, the documented inventory gradually diverges from the actual state of the environment.

This divergence creates a subtle but meaningful risk. Leadership sees documentation and assumes it is current. IT teams recognize that maintaining it requires time they often cannot spare. Over time, the gap between documented inventory and live infrastructure becomes both costly and operationally risky.

Manual reconciliation is inherently reactive, capturing historical state rather than reflecting current conditions.
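The divergence described here can be measured directly. The following is a minimal sketch that compares asset IDs in a spreadsheet export against IDs reported by a discovery scan. The CSV layout, the `asset_id` column name, and the sample IDs are assumptions made for illustration.

```python
import csv
import io


def inventory_drift(documented, discovered):
    """Compare documented asset IDs with discovered ones."""
    documented, discovered = set(documented), set(discovered)
    return {
        "undocumented": sorted(discovered - documented),  # live but not in the file
        "stale": sorted(documented - discovered),         # in the file but not seen
    }


# Stand-in for a spreadsheet export (hypothetical columns and values).
spreadsheet_csv = """asset_id,owner
lt-001,ana
lt-002,ben
srv-010,ops
"""
documented_ids = [
    row["asset_id"] for row in csv.DictReader(io.StringIO(spreadsheet_csv))
]

# Stand-in for the output of an automated discovery scan.
discovered_ids = ["lt-001", "srv-010", "vm-batch", "lt-204"]

drift = inventory_drift(documented_ids, discovered_ids)
print(drift)
```

Run on a schedule, a check like this surfaces the gap as it opens instead of leaving it to be found during the next manual update.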

Continuous discovery changes that dynamic. Assets are identified automatically. Usage is reconciled on an ongoing basis. Vulnerability data aligns with live inventories. Instead of preparing for renewal meetings with static spreadsheets, teams operate from current intelligence.

For lean IT teams, this shift is not about sophistication. It is about reclaiming time and reducing uncertainty. When inventory reflects reality without manual intervention, ITAM moves from documentation maintenance to operational oversight.

Spreadsheets can still serve as reporting artifacts. They should not function as the primary source of truth.

What Proactive IT Asset Management Actually Looks Like

Proactivity is often described conceptually. In practice, for mid-market IT teams, it has concrete characteristics.

When leadership asks about software spend, the answer is drawn from a live operational view rather than a manually reconciled document. When vendors initiate renewal discussions, license exposure and utilization data are already understood. When vulnerability alerts surface, they are visible in the context of the full IT estate (SaaS applications, cloud workloads, endpoints, and servers) rather than as isolated findings.

The goal is not perfection. It is keeping the team's awareness aligned with the environment as it actually stands today.

Block 64’s model of unified IT visibility is designed for that mid-market reality. Rather than requiring wholesale system replacement, it consolidates SaaS, cloud, endpoint, and vulnerability data into a continuously updated view that overlays existing tools. Teams move from fragmented dashboards to a single operational lens without introducing enterprise-level complexity.

Block 64 gives mid-market IT teams the visibility and intelligence required to shift from reactive firefighting to controlled management, with initial insight delivered in minutes.

Get your first insight in 15 minutes.